The latest W3C newsletter highlighted something called the Web Neural Network API, or WebNN. Basically, it’s a way to run AI models right in your browser without sending data to the cloud. Your computer or phone can handle it using its CPU, GPU, or a dedicated AI accelerator (an NPU, like Apple’s Neural Engine). That can mean faster results, offline use, and better privacy, since your data doesn’t have to leave your device.

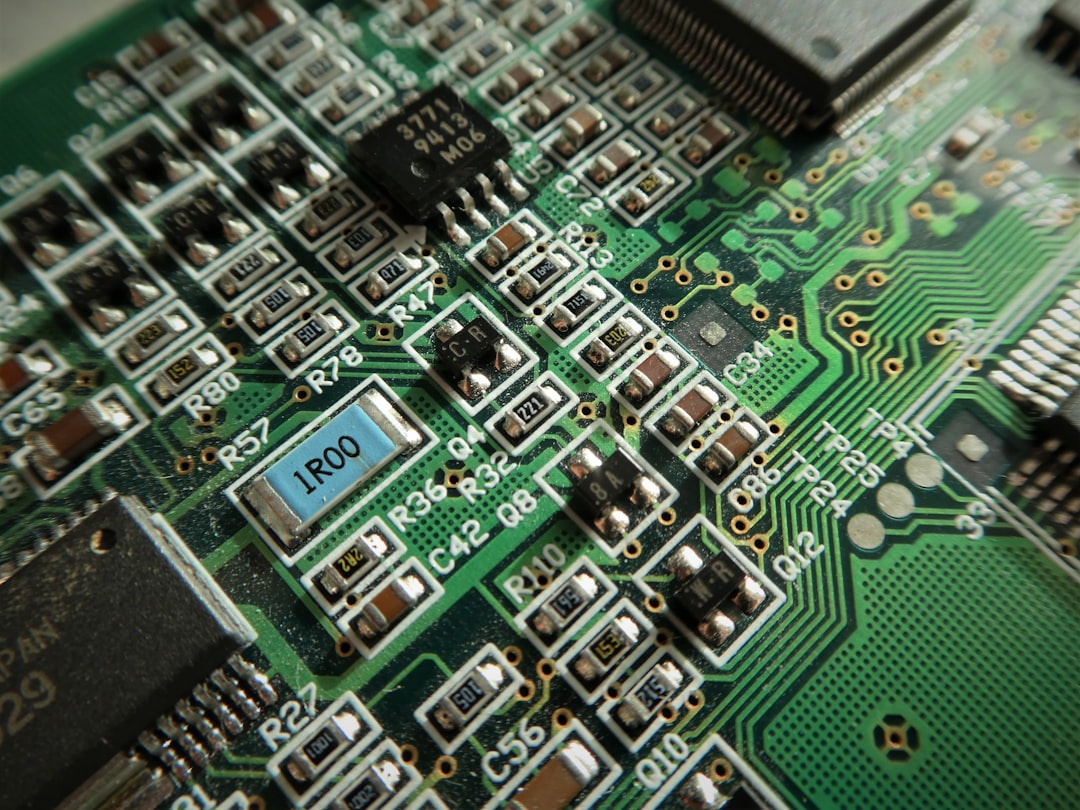

Right now, if developers want AI in the browser, they usually rely on libraries like TensorFlow.js or ONNX Runtime Web. That works, but it’s not always smooth. Big models take a long time to load and run slowly on low-end devices, and it’s tricky to keep everything private and efficient across different browsers.

WebNN makes it easier for developers to bring AI to the web by giving them a standard API that the browser maps onto whatever acceleration the user’s hardware offers. The models themselves still ship with the app, which actually has some big advantages: you can use techniques like smaller or distilled models, quantized weights, and browser caching to keep things fast and lightweight, while still running entirely on the client.
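To make the "quantized weights" idea concrete, here’s a rough sketch of symmetric int8 quantization in TypeScript. This is not WebNN API code, and `quantizeInt8` and `dequantizeInt8` are made-up helper names for illustration. Real toolchains usually quantize models ahead of time with more careful schemes, so treat this as a picture of the idea rather than how any particular library does it.

```typescript
// Symmetric int8 quantization: a minimal sketch, not part of WebNN.
// The helper names below are hypothetical.

interface QuantizedTensor {
  values: Int8Array; // 1 byte per weight instead of 4
  scale: number;     // multiply by this to get approximate floats back
}

function quantizeInt8(weights: Float32Array): QuantizedTensor {
  // Find the largest magnitude so the value range maps onto [-127, 127].
  let maxAbs = 0;
  for (let i = 0; i < weights.length; i++) {
    maxAbs = Math.max(maxAbs, Math.abs(weights[i]));
  }
  // Avoid dividing by zero when every weight is zero.
  const scale = maxAbs === 0 ? 1 : maxAbs / 127;

  const values = new Int8Array(weights.length);
  for (let i = 0; i < weights.length; i++) {
    values[i] = Math.round(weights[i] / scale);
  }
  return { values, scale };
}

function dequantizeInt8(q: QuantizedTensor): Float32Array {
  const out = new Float32Array(q.values.length);
  for (let i = 0; i < q.values.length; i++) {
    out[i] = q.values[i] * q.scale;
  }
  return out;
}
```

Each weight goes from 4 bytes to 1, so the download is roughly a quarter of the size, at the cost of a small rounding error per weight (no more than one `scale` step in this sketch).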

Looking forward, WebNN could make a bunch of things possible in the browser, like:

- Smart text stuff: autocomplete, summarization, and analysis

- Real-time speech recognition or noise cancelling for calls

- Image and video tricks: object detection, background blur, and segmentation

- Small-scale AI generation: text or images right in the browser

- Personalized recommendations or predictive hints for users

- Scanning and understanding documents, like OCR

The cool part is that running these models on-device means you don’t have to constantly call a cloud service or pay per-request fees for a hosted LLM. Everything can happen locally, which cuts cost, reduces latency, and keeps your data private.

It’s still early days and support is limited, but it’s a glimpse at a future where web apps could do more AI on-device, without needing a cloud server for every little thing.
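Since support is limited, an app would probably want to check for WebNN before relying on it. Here’s a minimal sketch of that check in TypeScript. The WebNN spec exposes its entry point as `navigator.ml` and WebGPU exposes `navigator.gpu`, but `pickBackend`, `NavigatorLike`, and the `Backend` labels are hypothetical names invented for this example, and the fallback order is just one reasonable assumption.

```typescript
// Feature-detection sketch. All names here are hypothetical.
type Backend = "webnn" | "webgpu" | "wasm";

// Only the parts of `navigator` this sketch looks at.
interface NavigatorLike {
  ml?: unknown;  // present when the browser exposes WebNN
  gpu?: unknown; // present when the browser exposes WebGPU
}

function pickBackend(nav: NavigatorLike): Backend {
  // Prefer WebNN when the browser exposes it.
  if (nav.ml !== undefined) return "webnn";
  // Otherwise try a GPU path.
  if (nav.gpu !== undefined) return "webgpu";
  // Assumed last resort: a WebAssembly build running on the CPU.
  return "wasm";
}
```

In a browser you’d call it as `pickBackend(navigator as NavigatorLike)`. Keep in mind that finding `navigator.ml` only tells you the API is there, not that a particular device type or operation is supported, so a real app would still need to handle failures when creating a context or building a model.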